Why and how to use Docker for deploying your Machine Learning applications?

Let's say you are building a Machine Learning web application with a multi-disciplinary data team. Every team member uses their own local development environment. How do you ensure that the ML application behaves consistently across these environments? Something that works on your machine may not work on a team member's machine, let alone on the production server. Your laptop has a specific operating system (OS) and a specific Python runtime, and your application depends on specific versions of libraries, frameworks, and other dependencies. The solution? Isolate your application with containers. How? Enter Docker, the most widely used containerization tool.

9.1 First of all, what are containers?

Before we dive into the wonderful world of Docker, let's first investigate the concept of containers. A container is a standardized software component that contains all of the necessary elements to run in any environment. In this way, containers virtualize the OS and can run anywhere, from a private data center to the public cloud or even a personal laptop. This ensures that an application runs quickly and consistently across different computing environments. The development team can thus move fast, deploy software efficiently, and operate at scale.

A container contains the following elements:

- code for the application;

- runtime for the application tools;

- libraries and frameworks;

- configuration settings.

If you want to read more about containers and their benefits, check out this guide.

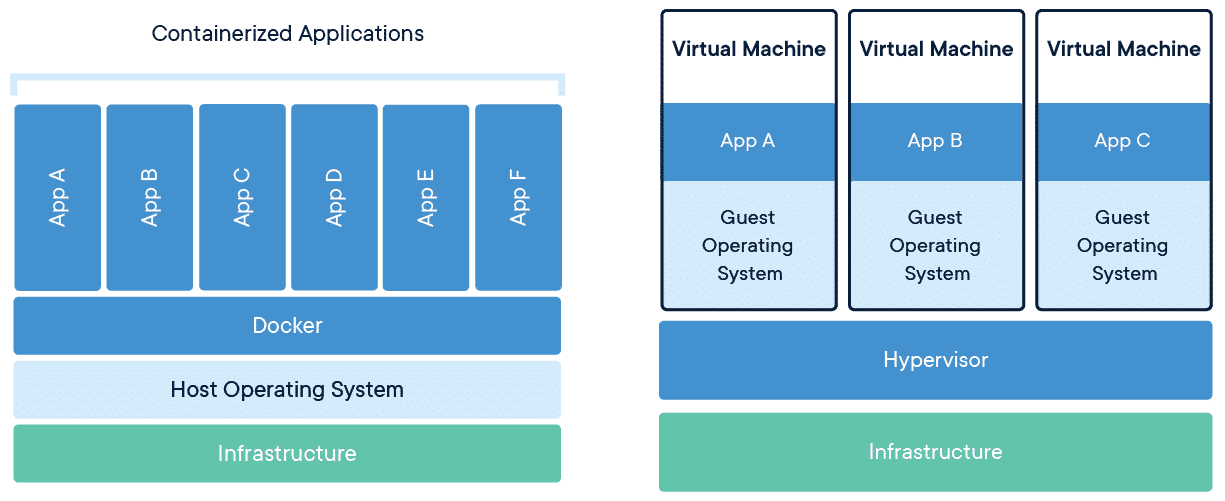

Containers are often compared to virtual machines (VMs). They both allow you to package your application together with libraries and other dependencies in isolated environments. However, containers are more lightweight, virtualize at the OS level, use far less memory, and are easily customizable. See the diagram below for the differences in architecture between VMs and containers:

Read more about containers vs. VMs here.

9.2 What is Docker?

Now that we know what containers are, let's take a look at Docker. Docker is an open-source containerization platform, developed by Docker, Inc. It was initially released in 2013 and is written in the Go programming language.

Docker allows you to easily deploy your application in a container that runs on a host operating system. With Docker, all the required components of the software (listed above) are specified in Docker configuration files (e.g. a Dockerfile). Anything added to this configuration automatically becomes available to every developer working on the project.

How does Docker work under the hood? Let's take a look at Docker's architectural components.

- Docker Client - The command line tool that allows the user to interact with the daemon. More generally, there can be other forms of clients too, such as Kitematic, which provides a GUI to its users.

- Docker Daemon - The background service running on the host that manages building, running and distributing Docker containers. The daemon is the process that runs in the operating system which clients talk to.

- Images - The blueprints of our application which form the basis of containers.

- Containers - Created from Docker images and run the actual application.

- Docker Hub - A registry of Docker images. You can think of the registry as a directory of all available Docker images that you can pull and use, for example as the base image in your Dockerfile. If required, you can host your own Docker registry and pull images from it.

Docker uses a client-server architecture. The Docker client talks to the Docker daemon, which does the heavy lifting of building, running, and distributing your Docker containers. The Docker client and daemon can run on the same system, or you can connect a Docker client to a remote Docker daemon. The Docker client and daemon communicate using a REST API, over UNIX sockets or a network interface.
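To make the client-server idea concrete, here is a minimal sketch of a tiny "client" that asks the daemon whether it is alive by calling the Engine API's `/_ping` endpoint over a UNIX socket. It assumes a Linux or macOS host and the default socket path `/var/run/docker.sock`; the class and function names are made up for this illustration, and the real Docker client does far more than this.

```python
import http.client
import socket


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that talks over a UNIX domain socket instead of TCP."""

    def __init__(self, socket_path, timeout=2.0):
        # "localhost" only fills the Host header; the real target is the socket file.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        try:
            sock.connect(self.socket_path)
        except OSError:
            sock.close()
            raise
        self.sock = sock


def daemon_reachable(socket_path="/var/run/docker.sock"):
    """Return True if a daemon answers GET /_ping with status 200."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", "/_ping")
        return conn.getresponse().status == 200
    except (OSError, http.client.HTTPException):
        # Socket missing, permission denied, or no valid HTTP answer.
        return False
    finally:
        conn.close()
```

Calling `daemon_reachable()` on a machine where Docker is running (and where your user may access the socket) should return `True`; on a machine without Docker it returns `False`.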

9.3 Why should you use Docker?

Here are some of the main advantages of using Docker:

- When working in a team, you don't need to worry about members having different versions of the programming language, libraries, etc. This means fast deployments and migrations.

- It saves hours in patching and downtime when compared to virtualization. It enables flexible resource sharing.

- Docker enables you to scale your application.

- A lot of plugins are available to enhance its features. Docker Hub contains pre-built images for a wide variety of use-cases.

- It saves storage space, since containers share the host's kernel and images share common layers.

A lot of advantages, as you can see! However, not all applications benefit from containers, and there is a learning curve when you integrate Docker for the first time. It is definitely worth it, though! To ease the learning curve, you could follow the tutorials from Docker's own learning resources, which you can find here.

9.4 How to use Docker?

Docker images

Docker images are built from the list of instructions in your Dockerfile. The Docker client sends the Dockerfile and its build context to the Docker daemon, which executes the instructions one by one. It's a simple way to automate the image creation process. The best part is that the shell commands you write in a Dockerfile (for example after a `RUN` instruction) are the same commands you would type in a Linux terminal. This means you don't have to learn much new syntax to create your own Dockerfiles.

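Each instruction in a Dockerfile results in a layer. Each layer is an image itself, just one without a human-assigned tag, and stores only the changes compared to the image it’s based on. (In current Docker versions, only instructions that modify the filesystem, such as `RUN`, `COPY`, and `ADD`, add to the image size; the others only record metadata.) Read more about Docker layers here.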

Some tips when building the Dockerfile:

- Minimize the number of layers.

- Don’t install unnecessary packages.

- When installing packages, always combine `apt-get update` with `apt-get install` in the same `RUN` statement.

- Python libraries should be placed in a `requirements.txt` file instead of being installed explicitly in the Dockerfile steps.

You can find a cheatsheet for Dockerfile commands here and some best practices for writing Dockerfiles here.
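The tips above can be sketched as a small Python script that writes out an example Dockerfile. Everything specific in it is an assumption for illustration: the `python:3.11-slim` base image, the `curl` system package, and the `main:app` FastAPI entry point served by `uvicorn`. Adapt these to your own project rather than treating them as the required setup.

```python
from pathlib import Path

# Example Dockerfile text for a hypothetical Python web app. The base image,
# the curl package, and the main:app entry point are illustrative assumptions.
DOCKERFILE = """\
FROM python:3.11-slim

WORKDIR /app

# Update and install in a single RUN so the package index is never stale,
# and clean up in the same layer to keep the image small.
RUN apt-get update \\
    && apt-get install -y --no-install-recommends curl \\
    && rm -rf /var/lib/apt/lists/*

# Python dependencies are listed in requirements.txt, not in separate RUN steps.
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt

COPY . .

# Default command executed when a container starts from this image.
CMD ["uvicorn", "main:app", "--host", "0.0.0.0", "--port", "80"]
"""


def write_dockerfile(directory="."):
    """Write the sketch to <directory>/Dockerfile and return its path."""
    path = Path(directory) / "Dockerfile"
    path.write_text(DOCKERFILE)
    return path
```

Calling `write_dockerfile()` in your project folder creates the file; you can of course also paste the text between the triple quotes into a file named `Dockerfile` by hand (using a single backslash at each line continuation).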

You can build the Docker image by running `docker build -t <image_name> .` in the command line. The `-t` flag gives your image a name (and optionally a tag) that you can use to refer to the image later on, and the `.` sets the build context to the current directory, where Docker looks for a file named `Dockerfile` by default. Here you can find documentation on the `docker build` command and all its possible flags.

Docker containers

You can create and start a new container from a Docker image by running `docker run <image_name>` in the command line. `docker run` picks up the image that was built from the instructions in your Dockerfile. All of those instructions are executed at build time except `CMD`, which specifies the default command that is run when a container is started from the image. More information on the `docker run` command can be found here.

When using passwords and other confidential information, it is recommended to put this information in a `.env` file. You can pass this file to the `docker run` command by extending it with `--env-file=.env`.
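Inside the container, the values from the `.env` file show up as ordinary environment variables. A minimal sketch of how application code might read them is shown below; `DB_USER` and `DB_PASSWORD` are hypothetical variable names, so use whatever keys your own `.env` file defines.

```python
import os


def load_db_settings(environ=os.environ):
    """Read database credentials from environment variables.

    DB_USER and DB_PASSWORD are hypothetical names used for illustration.
    """
    password = environ.get("DB_PASSWORD")
    if password is None:
        # Fail early with a clear message instead of connecting without a password.
        raise RuntimeError("DB_PASSWORD is not set; pass it via --env-file")
    return {"user": environ.get("DB_USER", "postgres"), "password": password}
```

Accepting the environment as a parameter keeps the function easy to test with a plain dictionary, while the default still reads the real process environment.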

Tip: instead of executing the `docker build` and `docker run` commands sequentially and manually via the terminal, you can also add them to a separate bash script or Makefile and then only execute this file.
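As an alternative to a bash script or Makefile, the same idea can be sketched in Python with the standard `subprocess` module. The helper names, the image name, and the port mapping in the usage note are hypothetical; only the `docker build -t`, `docker run`, `--env-file`, and `-p` options themselves come from the Docker CLI.

```python
import subprocess


def build_command(image_name, context="."):
    """Return the argument list for `docker build -t <image_name> <context>`."""
    return ["docker", "build", "-t", image_name, context]


def run_command(image_name, env_file=None, publish=None):
    """Return the argument list for `docker run`, with optional extras."""
    command = ["docker", "run"]
    if env_file:
        command.append(f"--env-file={env_file}")
    if publish:
        # publish is a "host_port:container_port" string, e.g. "8000:80".
        command += ["-p", publish]
    command.append(image_name)
    return command


def build_and_run(image_name, **run_options):
    """Build the image, then start a container; stop at the first failure."""
    subprocess.run(build_command(image_name), check=True)
    subprocess.run(run_command(image_name, **run_options), check=True)
```

With Docker installed, `build_and_run("ml-app", env_file=".env", publish="8000:80")` would run both steps in order. Keeping the command construction separate from execution makes it possible to inspect or log the commands first.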

Storage

A container's writable layer exists only as long as the container does. When a container is destroyed and a new one is created from the same Docker image, none of the changes made inside the original container are carried over. Therefore, you’ll lose data any time you destroy one container and create a new one. To avoid losing data, Docker provides volumes and bind mounts, two mechanisms for persisting data outside the container's own filesystem.

- Bind mounts: Bind mounts have been available in Docker since its earliest days for persisting data. A bind mount mounts a file or directory from your host machine into your container, referenced by its absolute path on the host. Example: `-v "$(pwd)":/var/lib/postgresql/data`.

- Volumes: Volumes are a great mechanism for adding a data persistence layer to your Docker containers, especially when you need to keep data after shutting down your containers. Docker volumes are completely handled by Docker itself and are therefore independent of both your directory structure and the OS of the host machine. When you use a volume, a new directory is created within Docker’s storage directory on the host machine, and Docker manages that directory’s contents. Example: `-v postgres_data:/var/lib/postgresql/data`, where `postgres_data` is an example volume name. (If the part before the colon is a host path such as `$HOME/docker/volumes/postgres`, Docker treats it as a bind mount instead.)

Read more about storage in containers here.
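The difference between the two mechanisms becomes more visible with the `--mount` flag of `docker run`, a more explicit alternative to `-v` that names the mount type. The sketch below only builds the option string; the function name and the example paths are hypothetical, and the absolute-path check assumes a Linux or macOS host.

```python
def mount_option(kind, source, target):
    """Build the value for `docker run --mount ...`.

    kind   -- "bind" (source is an absolute host path) or
              "volume" (source is the name of a Docker-managed volume)
    target -- the path inside the container
    """
    if kind not in ("bind", "volume"):
        raise ValueError(f"unsupported mount type: {kind!r}")
    if kind == "bind" and not source.startswith("/"):
        raise ValueError("a bind mount needs an absolute host path")
    return f"type={kind},source={source},target={target}"
```

For example, `mount_option("volume", "postgres_data", "/var/lib/postgresql/data")` yields a string you could pass as `docker run --mount <string> ...`, while the `"bind"` variant points at a directory you manage yourself.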

Ports

If you want to share your model's predictions with others, you need to make ports available. By default, when you create or run a container, it does not publish any of its ports to the outside world. To make a port available to services outside of Docker, or to Docker containers which are not connected to the container’s network, use the --publish or -p flag. This creates a firewall rule which maps a container port to a port on the Docker host to the outside world.

Read more about Docker networking here.
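A quick way to check from the host whether a published port is actually reachable is to try opening a TCP connection to it. The sketch below assumes a container started with something like `-p 8000:80`; the port numbers and the function name are hypothetical.

```python
import socket


def port_is_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Connection refused, timed out, or host unreachable.
        return False
```

With the container running, `port_is_open("localhost", 8000)` should return `True`; if you start the same container without `-p`, it should return `False`, because nothing on the host is listening on that port.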

9.5 Advanced topics

If you want to add more components to your application stack, such as a MySQL database or an API, then you need multiple containers, since each container should do one thing and do it well. There are multiple tools for defining and running multi-container Docker applications, which will be discussed below.

Docker-compose is designed for defining and running multiple containers as a single application. Each container runs in isolation, but the containers can interact with one another over a shared network. You define the containers and their settings in a YAML file.

Kubernetes is a portable, extensible, open source platform for managing containerized workloads and services, that facilitates both declarative configuration and automation. It has a large, rapidly growing ecosystem. Kubernetes services, support, and tools are widely available.

Docker-compose and Kubernetes are out of scope for this chapter. However, you can learn more about Docker-compose here and more about Kubernetes here.

Assignment

First, download and install Docker Desktop:

- Mac: execute `brew install docker --cask` via the terminal.

- Windows: https://docs.docker.com/desktop/windows/install/

- Create a Dockerfile that:

  - Installs all the required packages using a `requirements.txt` file.

  - Runs your FastAPI app locally on your computer when you run the Docker image.

  - You can consult this guide for pointers on how to do it!

Tip: Docker caches the layers of your Docker image top-down. If the content of a layer and of all layers before it has not changed, Docker loads the cached layer instead of rebuilding it. Therefore, the order of commands in your Dockerfile matters! To avoid reinstalling your requirements every time you change something in your Python script and run `docker build`, copy the `requirements.txt` file separately before installing the requirements, and only then copy your application code to the image.
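The effect of instruction order on caching can be illustrated with a toy model. This is a simplification and not Docker's actual cache implementation: each build step is a pair of an instruction and a made-up fingerprint of its inputs, and a step is reused only if it and every step before it are unchanged.

```python
def rebuilt_steps(previous, current):
    """Return the instructions of `current` that cannot come from the cache.

    Each build is a list of (instruction, input_fingerprint) pairs. Once one
    step differs from the previous build, it and all later steps are rebuilt.
    """
    for i, step in enumerate(current):
        if i >= len(previous) or previous[i] != step:
            return [instruction for instruction, _ in current[i:]]
    return []


PIP = ("RUN pip install -r requirements.txt", "")

# Only the application code changes between the two builds (app-v1 -> app-v2).
naive_before = [("COPY . .", "reqs-v1+app-v1"), PIP]
naive_after = [("COPY . .", "reqs-v1+app-v2"), PIP]

ordered_before = [("COPY requirements.txt .", "reqs-v1"), PIP, ("COPY . .", "reqs-v1+app-v1")]
ordered_after = [("COPY requirements.txt .", "reqs-v1"), PIP, ("COPY . .", "reqs-v1+app-v2")]

print(rebuilt_steps(naive_before, naive_after))
# → ['COPY . .', 'RUN pip install -r requirements.txt']
print(rebuilt_steps(ordered_before, ordered_after))
# → ['COPY . .']
```

In the naive order, a code change invalidates the `pip install` step as well; with `requirements.txt` copied first, only the final copy of the application code is repeated.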