The headline and subheader tells us what you're offering, and the form header closes the deal. Over here you can explain why your offer is so great it's worth filling out a form for.

Remember:

This blog is the first in a series of three in which we walk you through a recent project we did on predicting house prices. In this case study we will discuss the entire process: from model development to implementation. Importantly, although we use house-price prediction as an example, the take-away messages will be relevant for any machine learning project, irrespective of the domain.

Predicting house prices is a well-known data science problem. Not only is it one of the ‘get started’ cases on the competition website Kaggle, it is also a popular use case for blogs on machine learning techniques. The typical case goes as follows: we have information about different characteristics of houses, such as square footage, number of rooms, and number of bathrooms (often incomplete). For some houses we know the house price and we want to predict the price of the other houses. Due to the concreteness and simplicity of the problem it lends itself well to explain basic concepts like imputation, transformation of variables, and model evaluation. We had the chance to bring this textbook use case to practice, where we did not only get access to data on the physical attributes of houses, but also to data on attributes related to the location of the house, such as the geographical coordinates and its distance to facilities. Unlike the textbook example the data includes houses that are spread over a large and diverse geographical area and contains quite some noise.

Huispedia is a new large Dutch house platform that aims to make it easier for individuals to sell their houses themselves. Core to this concept is an accurate prediction of a property its value. But rather than letting a realtor determine a good price, a model provides an indication of a properties’ value. This model should take into account all the relevant information of the house such as accessibility and location.

When we stepped into the project, the platform was already showing predicted house prices to their users. These prices were based on a complex set of business rules. This rule-based algorithm selects all houses with known historic house prices in the vicinity of the selected house. Next it scores the houses on similarity to the selected house in terms of square footage and the type of the house. Finally, it selects the houses with the highest scores, runs a linear regression on those observations, and derives the house price of the selected house. The rules and parameters where established based on business logic and continuously tweaked based on user feedback. As a result the algorithm worked quite well, especially for houses in densely populated areas with many similar houses in the neighborhood. However, when trying to derive house prices for houses in more sparsely populated areas or with less frequent characteristics the algorithm had a hard time coming up with a sensible house price.

As an alternative to this complicated set of business rules, we proposed to use a tree-based machine learning method. In principle, such methods could result in a similar type of solution: a tree-based algorithm could start with splits based on geo-location, therefore cutting the country up in blocks, so that all houses located close together fall in the same bucket, and then splitting further based on type, size and number of rooms. However, it can also yield very different solutions, with a less prominent role for location, so that similar types of houses on opposite sides of the country can also be grouped together and information on the prices of those houses can be used to predict house prices of houses in less populated regions. Therefore, the machine-learning approach is likely to outperform the rule-based algorithm; especially when dealing with houses other than your typical city apartment.

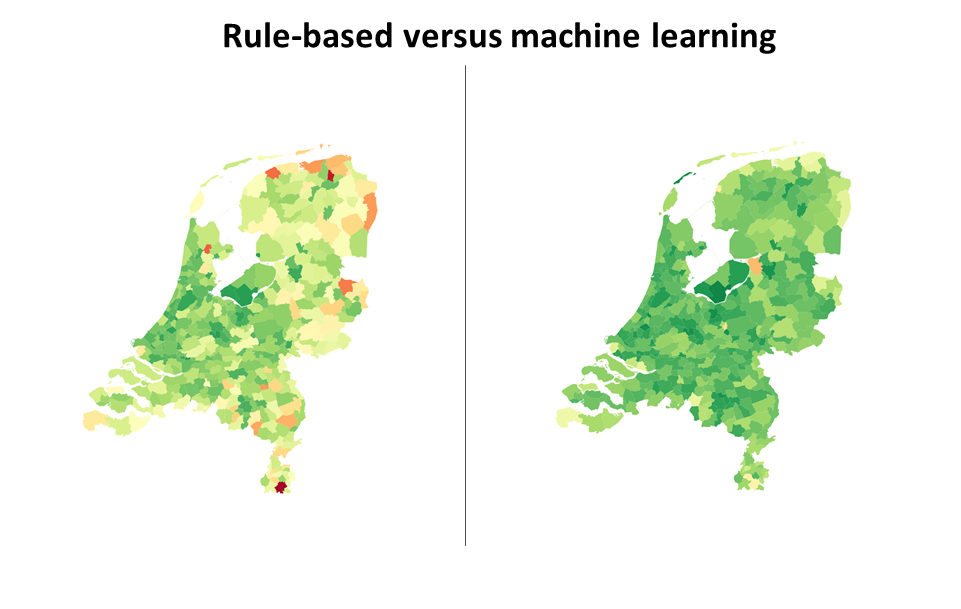

Applying the tree-based algorithm to the platforms its data and comparing its performance to the results generated by the rule-based algorithm confirmed the above intuition. Although the rule based algorithm was on par with (and sometimes even outperforming) the machine learning application for houses in densely populated areas, we saw the machine learning algorithm shine for houses in less populated areas (see Figure 1). Currently, the two algorithms are used next to each other, each for those types of houses they are likely to perform best for, so that we can reap the benefits of both approaches. This shows that machine learning methods do not always need to be a replacement, but can also be a great addition to existing solutions.

A down side of the machine-learning algorithm described above is that it only offers a point prediction: a single house price. However, for house seekers and sellers it is more helpful to know a range within which the house value is expected to lie. In our next blog we will explain how we dealt with uncertainty in house prices and how we constructed predicting confidence intervals for each house.

Figure 1: Average relative difference between predicted and actual house prices per municipality ranked worst (dark red) to best (dark green). Left: the results using a complex set of business rules. Right: the results when using a tree-based machine learning method.

Lorem ipsum dolor sit amet, consectetur adipiscing elit

Coltbaan 4C

3439 NG NIEUWEGEIN

+31 30 227 2961

info@vantage-ai.com

Follow us: