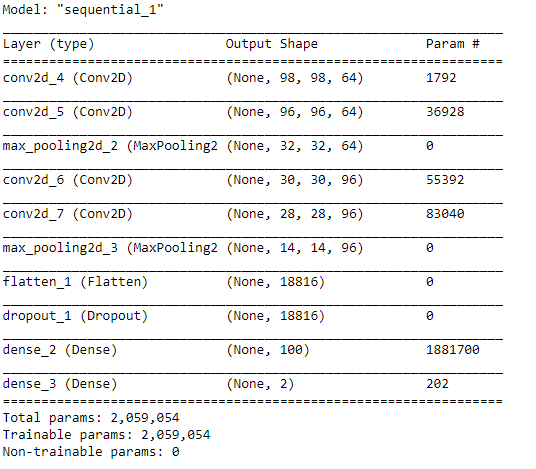

If you’ve worked with deep learning before, you might know about the virtues of GPU acceleration. If not, allow me to give you a short demonstration. In this scenario, I’ve copied a small convolutional neural network written in Keras from one of our weekly training sessions at Vantage AI. It can distinguish cats from dogs with reasonably bad accuracy, but it’s good enough for demonstration purposes. The network looks like this:

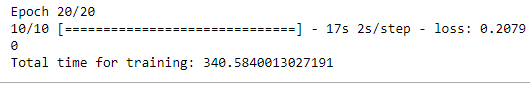

As you can see this network has a little over two million trainable parameters. Let’s try training this network for 20 epochs on 2000 images with a batch size of 150 using my standard installation of Tensorflow, along with recording the time it takes to train the model:

As you can see this network has a little over two million trainable parameters. Let’s try training this network for 20 epochs on 2000 images with a batch size of 150 using my standard installation of Tensorflow, along with recording the time it takes to train the model:

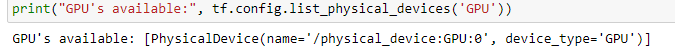

We’re not interested in the validation accuracy here (it’s 0.714, nothing to write home about). What I do want to draw your attention to is that training this network on a set of 2000 images (which is very little for a deep learning approach) took 17s per step, or a whopping 5:40 minutes, even though I’m using an AMD Ryzen 5600X, not a slow CPU by any means. Fortunately, we also have an NVIDIA RTX 3070 GPU installed in this machine. As a next experiment, I’ll switch to my Conda environment that has tensorflow-gpu installed. You can see Tensorflow has detected my GPU:

If you're interested in trying out tf-gpu for yourself, check out this link. Installing it and NVIDIA's CUDA drivers can be a bit of a challenge — especially on linux-based machines — but as we will see it's well worth the effort.

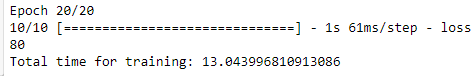

Now that we’re using the GPU-accelerated version of Tensorflow, we re-initialize and train the model in the exact same way as before and lo and behold:

13.04 seconds is what it took to train this model using GPU acceleration. That is 28 times faster. You can also notice that Tensorflow reports it takes 61 milliseconds to process each batch of 150 images, something that takes more than 2 seconds on CPU.

An NVIDIA RTX 3070

An NVIDIA RTX 3070

GPU’s are fundamentally different from CPU’s. There is a perfect analogy for why some calculations are so much faster on GPU’s that I will paraphrase to the best of my memory here. Imagine you have one group of 6 scientists with PhD’s in mathematics, and you have another group of 5888 14-year old kids. Your assignment is to find the solution to 50.000 different equations, but all in the order of e.g. 3+5, 18–2, 45–35. Which group do you think will be faster?

You could see your computer as a well-oiled corporation, in which the CPU would represent the C-level suite. It usually has from 4–8 cores that handle the delegation of computational tasks, memory management and everything that’s needed for your computer to run smoothly. These processors are great at doing fast, complex, long-running computations with high precision, which is why it’s perfectly doable for one of them to train most conventional machine learning models.

We'll get to how this is relevant to data science, don't worry.

We'll get to how this is relevant to data science, don't worry.

In this analogy, GPU’s are more akin to a division of a couple thousand lower-level employees. GPU’s were originally developed for graphics: pushing thousands (in 1996) to millions (nowadays) of pixels. This is a task that is highly parallellizable. When rendering a video game like Forza Horizon 5 in real time, there are hundreds if not thousands of sub-processes that all come together into one (admittedly pretty good looking) image.

Some of these processes, like computing the physics of individual suspension parts, tire grip or the AI of other drivers on the road, are quite complicated and not easily paralellizable, since the next computation depends on the outcome of the former. These calculations are therefore done by the CPU, since your CPU has 4–8 cores that are very fast and have tiny on-board memory caches that are much faster than talking to your RAM.

Graphics, however, are a different beast. Your 1978 Porsche 911 in Forza is made up of a list of 3D coordinates of vertices (which form polygons). Graphics cards were therefore developed to be very good at matrix multiplication and division on many of these vertices in parallel in order to convert these 3D coordinates to 2D on-screen coordinates. These computations are completely independent from each other, which makes them perfect for parallelization.

This is why GPU’s generally have a lot of cores, but they’re not as smart or fast as the ones in your CPU. To illustrate: the CPU in my computer has 6 cores that can run up to 4.6GHz, while my GPU has 5888 smaller cores that run at a much lower speed of 1.6GHz.

Along with this, GPU’s have their own working memory (VRAM) with much higher bandwidths than the RAM sticks that the rest of your system uses, as they’re directly integrated in the GPU itself instead of having to have data sent through your motherboard. This effect is also part of the reason why Apple’s M1 chips are so blazingly fast due to their system-on-a-chip approach where all components are unified in one chip, but I’ll leave that for a different blog.

Are you starting to feel how these properties translates to good performance in deep learning?

Let’s take two algorithms and compare them in terms of their training loop. The first is a supervised learning algorithm that you’re all familiar with: decision trees. When deciding how to split a node of a tree, your computer needs to compute the entropy and information gain for each possible split before it can make a decision. This is where the raw processing power of a CPU core comes in handy, as your CPU can power through these sequential computations very quickly.

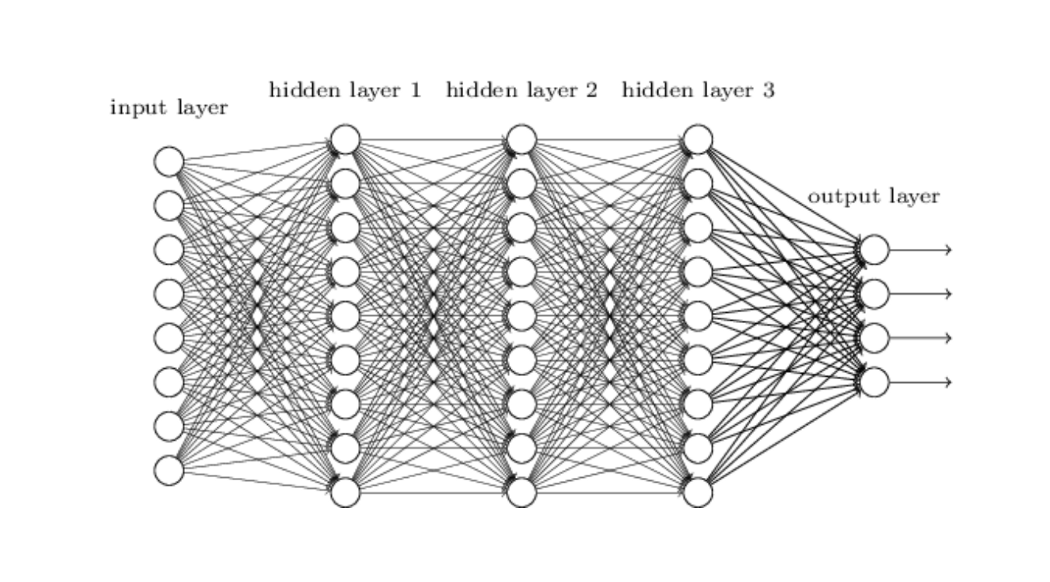

A small fully connected neural network architecture

A small fully connected neural network architecture

A neural network is a different beast, however. Overly simplified, a neural network is nothing more than a large graph of individual nodes and connections, all with specific weights. Propagating through this network is extremely simple; to compute the value of a node in hidden layer 1 you take the value of each node in the input layer, multiply it with the connection strength (weight) that connects it to the node in the next layer, and add that to the summed value of the next node. In other words, it boils down to millions of simple matrix multiplications, and in 2005 researchers were quick to figure out that the graphics card they used to play GTA: San Andreas after work was actually the perfect tool for this.

Back then, this wasn’t as easy as just pip install tensorflow-gpu, however. Dave Steinkrau et al. (2005) were among the first to train a two-layer fully connected DNN on a GPU. They had to perform an impressive piece of software engineering to do this, because GPU’s were highly specialized for actual graphics processing. This meant that they had to write the results of their calculations to actual pixel buffers, essentially tricking the GPU into thinking it was rendering images. This didn’t last long, however, because a very influential player was about to enter the market.

What really revolutionized the use of GPU’s for deep learning — and therefore greatly accelerated the deep learning revolution in general — was the introduction of NVIDIA’s Compute Unified Device Architecture. NVIDIA CUDA is an interface that allowed researchers to write arbitrary code in a C-like programming language, that can then be run on GPU’s. Writing code for GPU’s isn’t as straight-forward as just porting your code over to a different syntax, as the specific strengths of GPU’s (high memory bandwith, great parallelization) are difficult to harness and require a different way of thinking about software engineering and optimization.

We’re still talking 2010 here, and a lot has happened since then. NVIDIA has been fitting their GPU’s with additional dedicated tensor cores since the RTX series, which are cores that are even better at matrix multiplication but at the cost of accuracy. If you’ve used Google Colab or other cloud services for deep learning before, you might have also heard of TPU’s: Tensor Processing Units. These are processing units that lack the CUDA cores of GPUs and therefore need to be directly controlled by a CPU, but offer even more raw performance in terms of dumb matrix processing. These TPU’s aren’t something you’ll pick up at your local PC hardware store, but specifically geared towards the professional market, data centers and cloud providers.

I could write ten more pages about computer hardware and how it enabled the deep learning revolution, but I’m already straying too far from my expertise as a machine learning engineer and am even wondering if anyone is still reading this at this point. There are also a lot of technologies that deserve to be mentioned here. For example, AMD is now introducing GPUFORT as an open source alternative to CUDA, as they have finally realized they have missed the very profitable boat of enterprise deep learning applications. Another development that I’m extremely excited about is Apple’s recent move to the ARM architecture with their M1-chips, as Apple’ve also been working on their CoreML framework and different acceleration plugins for PyTorch and Tensorflow have been popping up recently.

The main takeaway of this story is that NVIDIA’s CUDA might be one of the most influential technologies you’ve never heard of: the introduction of CUDA kickstarted the re-popularization of deep learning of the 2010s. This inevitably lead to the AI revolution that we’ve experienced over the last few years; I’m not sure we would have been able to build self-driving cars with SciKit-learn.

There are a couple resources I used for this article, and one of them deserves a special mention in this blog: chapter 12.1.2 of Ian Goodfellow’s legendary book Deep Learning. The whole book is a must read for anyone working with deep learning, but this chapter was especially useful for this blog post.

If you’re interested in more stuff like this, you can follow me on LinkedIn and follow Vantage AI for similar content from our other MLE’s!

I'd like to thank Erik Jan de Vries, Emiel de Heij and Chris van Yperen for providing very valuable feedback.

Coltbaan 4C

3439 NG NIEUWEGEIN

+31 30 227 2961

info@vantage-ai.com

Volg ons: